Before You Paste That into ChatGPT

Data Sovereignty in Australian Defence Programs

In March 2023, Samsung allowed its semiconductor engineers to use ChatGPT to help with their work. Within a single month, three separate incidents of sensitive data leakage had occurred. One engineer pasted source code from a proprietary semiconductor database into the tool to debug an error. A second entered confidential code for identifying defective equipment to get optimisation suggestions. A third converted a recorded internal meeting into a document file and submitted it to generate meeting notes.

None of these engineers were acting maliciously. They were using a genuinely useful tool to do their jobs more efficiently. But because ChatGPT, by default at the time, retained user inputs and could use them to train and improve the model, Samsung's proprietary information entered OpenAI's data infrastructure, and Samsung had no ability to retrieve or delete it. Samsung subsequently banned the use of generative AI tools across the company and began developing its own internal AI platform.

Samsung is a semiconductor company, not a defence contractor. The consequences of its incidents were reputational and commercial. For Australian defence programs operating under export control obligations, classification requirements, and contractual data handling provisions, the consequences of the same behaviour would be considerably more serious.

This article is not about whether AI tools are useful in engineering environments. They are. It is about what practitioners and program managers need to understand before using them with program data in Australian defence and complex engineering contexts, where the legal, contractual, and security implications are significant and not always well understood.

This article does not constitute legal advice. The regulatory landscape governing export control, data handling, and information security in Australian defence programs is complex and program-specific. Practitioners and organisations should seek qualified legal and security advice before adopting AI tools in sensitive program contexts.

The Assumption Most People Are Making

The Samsung incidents are not an isolated case. In 2023, research by cybersecurity firm Cyberhaven found that 4.2% of employees who used AI tools had at some point submitted confidential company data to these systems, with estimates suggesting that large organisations could be sharing confidential data with AI tools hundreds of times per week. That data was collected before the current wave of AI tool adoption had reached its peak, so the rate of informal data submission to public AI tools in most organisations today is almost certainly higher. Cyberhaven's 2026 AI Adoption and Risk Report found that 39.7% of all AI interactions involved some form of sensitive data.

The default assumption driving this behaviour is that using an AI tool is similar to using a search engine or a word processor - a personal productivity tool that processes information locally and produces a useful output. This assumption is wrong, and acting on it in a defence context carries risk that extends well beyond the commercial and reputational consequences Samsung faced.

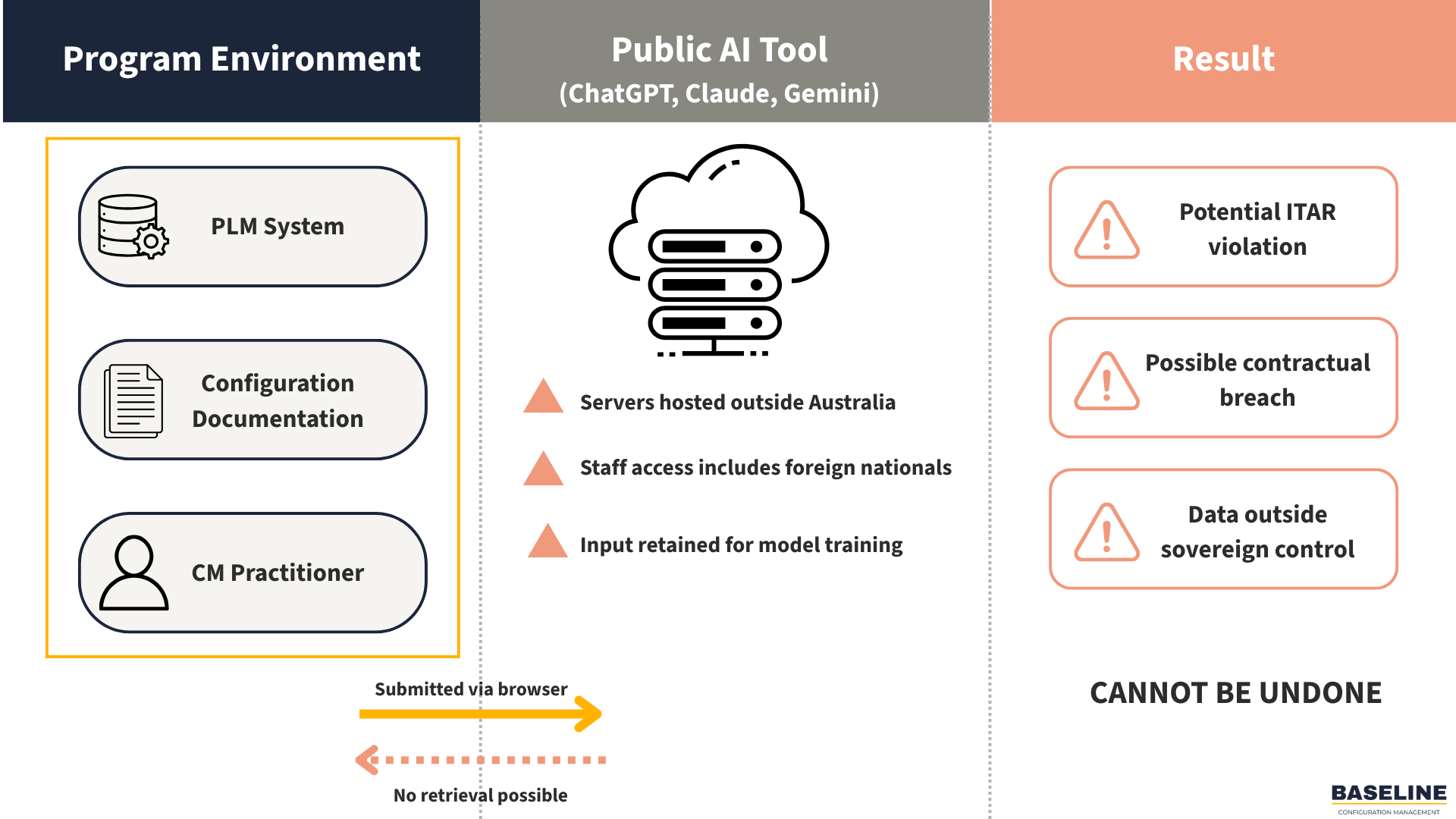

When content is submitted to a public-facing AI tool, it leaves the program environment. It travels to the tool provider's infrastructure, which is typically hosted in data centres outside Australia, operated under foreign law, and subject to access by the tool provider's staff and systems. Depending on the tool's terms of service, that content may be retained, used to improve the model, or accessed for purposes the user did not intend and cannot control.

For general productivity tasks involving non-sensitive information, this is an acceptable trade-off. For program data in Australian defence contexts, it frequently is not.

Figure 1: The moment program data is submitted to a public AI tool, it leaves the controlled program environment. In defence contexts, this transmission may constitute an unauthorised export under ITAR or Australian export control legislation.

ITAR: A Costly Mistake

The International Traffic in Arms Regulations (ITAR) is a United States export control regime governing the transfer of defence-related technology and technical data. It applies to Australian defence programs that involve US-origin controlled technology, a category that includes the majority of significant Australian defence acquisition programs.

Under ITAR, controlled technical data cannot be transmitted to foreign nationals or processed on systems outside the authorised technology control plan without an explicit licence or exemption. The definition of transmission is broad, and it includes electronic submission to a foreign-hosted system.

A public AI tool operated by a US company processes data on infrastructure that may be physically located in any number of countries and is legally subject to US law regardless of where the data sits. Submitting ITAR-controlled technical data to such a tool - an interface definition, a system specification, a change request that references controlled technology - may constitute an unauthorised export under ITAR regardless of intent.

There is a further dimension that is less widely understood: the deemed export rule. Under ITAR, allowing a foreign national to access controlled technical data, regardless of where they are physically located, constitutes an export requiring a licence. The engineering teams, operations staff, and infrastructure personnel who maintain commercial AI platforms almost certainly include non-US persons. This means that even a nominally US-hosted AI tool creates ITAR exposure through the deemed export mechanism, independent of where the data is physically stored.

The scale of ITAR enforcement consequences isn’t theoretical. Boeing agreed to pay a US$51 million penalty for ITAR violations involving unauthorised exports and retransfers of defence technical data, including sensitive information about the F-18, F-15, F-22, and AH-64 Apache platforms. The violations - 199 separate charges over five years - involved allowing employees at overseas partner companies to access ITAR-controlled technical data through Boeing's digital repository. The mechanism is directly analogous to submitting controlled technical data to a public AI tool: unauthorised digital access by parties outside the approved technology control framework.

ITAR enforcement is extraterritorial. The US Department of State can and does pursue enforcement action against non-US entities for ITAR violations. Civil penalties can exceed US$1 million per violation. Criminal penalties include up to 20 years imprisonment. Australian contractors operating under ITAR-controlled contracts have Technology Control Plans in place precisely to prevent uncontrolled transmission of this kind.

The practical guidance is unambiguous: ITAR-controlled program data must not be submitted to public AI tools under any circumstances. If there is any uncertainty about whether specific content is ITAR-controlled, treat it as controlled until a qualified export control specialist confirms otherwise.

Australian Export Controls and Defence Security Controls

Australia operates its own export control regime under the Defence Export Controls framework administered by the Department of Defence, supported by the Defence Trade Controls Act 2012, the Customs Act 1901, and associated regulations. The Defence and Strategic Goods List specifies the technology and goods subject to Australian export controls, which broadly align with, but are not identical to, international control regimes including the Wassenaar Arrangement.

Australian export control obligations apply to the transmission of controlled technology to foreign entities, including through electronic means. The same analysis that applies to ITAR applies here - submitting controlled technical data to a foreign-hosted AI tool may constitute an export requiring authorisation under Australian law.

Programs operating under the Defence Industry Security Program (DISP) have specific information security obligations that include controls over electronic transmission of sensitive program information. AI tool adoption that has not been assessed against DISP requirements may represent a compliance gap with consequences for the organisation's DISP membership and facility clearance status.

Data Residency and the Sovereign Cloud Question

Beyond export control, there is a broader data sovereignty consideration that applies to sensitive but unclassified program information that does not trigger specific export control obligations.

Data processed by a foreign-headquartered AI provider is subject to the laws of the country in which that provider operates. The United States CLOUD Act allows US law enforcement to compel US companies to produce data held anywhere in the world, including data belonging to foreign customers. For Australian defence programs, sensitive program data processed through a US-headquartered AI tool is potentially accessible to US government agencies under US law - regardless of the sensitivity classification of the data or the intentions of the tool provider.

This may be an acceptable risk in some contexts and an unacceptable one in others. The critical point is that it needs to be a conscious governance decision, not an unconsidered consequence of informal tool adoption.

The Australian government's Hosting Certification Framework identifies cloud services that meet Australian government data sovereignty requirements. AI tools and platforms offered by certified providers, with contractual data residency and sovereignty guarantees, are the appropriate infrastructure for sensitive program data. The Defence Strategic Review and subsequent policy direction have accelerated investment in sovereign digital capability. This is the direction the industry is moving, and practitioners and program managers should understand the framework well enough to make informed decisions about which tools sit within it and which do not.

Classification: The Non-Negotiable Boundary

Classified information, at any classification level under the Australian Government Security Classification System, must not be processed on systems that are not accredited to handle that classification level. Public AI tools are not accredited. This boundary is absolute and shouldn’t require extended elaboration.

The practical challenge is that practitioners working in programs with classified elements often work across classified and unclassified environments, and the sensitivity of specific content is not always obvious in isolation. A configuration item that appears benign may be sensitive in the context of a classified program. An interface description that references a classified system capability may carry sensitivity regardless of the document it appears in.

The question to answer before submitting any program content to an AI tool is: can I clearly demonstrate that this content is authorised for processing on a non-accredited commercial platform? If the answer is uncertain, the content stays out of the tool.

The Aggregate Sensitivity Problem

Individual pieces of program data may appear innocuous in isolation. A part number, a system name, a subsystem description, a change request narrative - none of these is necessarily sensitive on its own. Together, they can form a picture of a program's architecture, capabilities, and design decisions that is considerably more sensitive than any individual document.

An AI tool used over time to process configuration data from a program accumulates context. If that tool's data is accessible to the provider, retained beyond the session, or inadvertently disclosed through a security incident, the aggregate picture represents a program sensitivity that was never explicitly authorised for external disclosure. Document-by-document sensitivity assessments do not capture this risk. The sum is more sensitive than the parts.
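The gap between per-document and aggregate assessment can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `DisclosureLog` class, the facet labels, and the threshold of three are hypothetical, and a real program would set its own criteria through its security governance. The point is only that a check applied to each submission in isolation passes every time, while a running tally per tool does not.

```python
from collections import defaultdict
from typing import Iterable

# Assumed value for illustration only; a real threshold would come from
# the program's own security governance, not from this sketch.
AGGREGATE_THRESHOLD = 3


class DisclosureLog:
    """Tracks which facets of a program each external tool has seen."""

    def __init__(self, threshold: int = AGGREGATE_THRESHOLD) -> None:
        self.threshold = threshold
        self._facets_by_tool = defaultdict(set)

    def record(self, tool: str, facets: Iterable[str]) -> bool:
        """Record the facets revealed by one submission.

        Returns True once the distinct facets disclosed to this tool
        reach the threshold, even if each submission revealed only one.
        """
        self._facets_by_tool[tool].update(facets)
        return len(self._facets_by_tool[tool]) >= self.threshold


# Three submissions that each look routine in isolation.
log = DisclosureLog()
first = log.record("public-tool", ["part_number"])
second = log.record("public-tool", ["system_name"])
third = log.record("public-tool", ["subsystem_description"])
```

Here `first` and `second` are `False` and `third` is `True`: no single submission crossed the line, but the accumulated picture did.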

This isn’t just a hypothetical concern. The Samsung incidents illustrate that even when each individual query appears routine, the cumulative effect is the transfer of a meaningful picture of proprietary operations to an external platform. In a defence context, the equivalent transfer would represent a far more serious exposure.

The appropriate response is to treat AI tools used with program data as program systems in their own right, subject to the same information security governance as any other system that touches sensitive information.

Intellectual Property and Commercial Sensitivity

Not all data sovereignty risk sits in the export control or classification domain. Configuration documentation, design data, supplier specifications, manufacturing processes, and cost structures frequently represent contractor intellectual property with significant commercial value, separate from any regulatory classification.

Many defence contracts include explicit clauses governing the handling of program-sensitive information. Using a public AI tool to process that information without authorisation may constitute a breach of those clauses regardless of intent, with contractual consequences independent of any regulatory liability.

The practical implication is that the question "is this authorised for processing on a public AI tool?" has both a regulatory dimension and a contractual one. Organisations that have not explicitly addressed AI tool use in their data handling policies are operating with an ambiguity that will not resolve itself in their favour if an incident occurs.

What Program Managers and Practitioners Should Do Now

For program managers, the immediate priority is establishing a clear organisational position on AI tool use before the informal adoption that is already occurring creates a compliance problem. This does not require prohibiting AI tools; it requires defining which tools are authorised for which data types under which conditions, and communicating that clearly to program teams. The Samsung response - a blanket ban followed by eventual adoption of a governed internal alternative - is one model. A tiered authorisation framework that permits public AI tools for non-sensitive work while restricting them from program-specific data is another, and is arguably more sustainable.

For configuration management (CM) practitioners specifically, the data governance dimension of AI tool adoption sits squarely within the CM function. CM is the discipline responsible for ensuring that configuration information is accurate, controlled, and traceable, and AI tools that process configuration data without governance controls create risks to all three. Practitioners who understand the program's data landscape, its export control obligations, and its contractual data handling requirements are well positioned to contribute to the governance framework that responsible AI adoption requires.
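As one way to picture a tiered authorisation framework, the Python sketch below maps tool tiers to the data categories each may process. The category names, tier names, and the mapping itself are hypothetical and chosen only for illustration; actual authorisations are program-specific and need qualified export control and security advice. The sketch denies by default, so unassessed content and unlisted combinations are refused.

```python
from enum import Enum
from typing import Optional


class DataCategory(Enum):
    # Hypothetical categories; a real scheme would follow the program's
    # own classification, export control, and contractual definitions.
    NON_SENSITIVE = "non_sensitive"
    COMMERCIAL_IN_CONFIDENCE = "commercial_in_confidence"
    EXPORT_CONTROLLED = "export_controlled"
    CLASSIFIED = "classified"


class ToolTier(Enum):
    PUBLIC = "public"      # public-facing commercial AI tool
    GOVERNED = "governed"  # internally governed or sovereign-hosted tool


# Assumed policy for illustration only. Export-controlled and classified
# data are deliberately absent from every tier in this sketch.
POLICY = {
    ToolTier.PUBLIC: frozenset({DataCategory.NON_SENSITIVE}),
    ToolTier.GOVERNED: frozenset({
        DataCategory.NON_SENSITIVE,
        DataCategory.COMMERCIAL_IN_CONFIDENCE,
    }),
}


def is_authorised(tool: ToolTier, category: Optional[DataCategory]) -> bool:
    """Return True only if the policy explicitly permits the combination.

    A category of None means the content has not been assessed, which is
    treated as not authorised.
    """
    if category is None:
        return False
    return category in POLICY.get(tool, frozenset())
```

Under these assumptions, `is_authorised(ToolTier.PUBLIC, DataCategory.NON_SENSITIVE)` returns `True`, while any call with an unassessed category (`None`) returns `False`, reflecting the rule that uncertain content stays out of the tool.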

For both, the underlying principle is the same one that governs configuration management more broadly: the convenience of an informal workaround is never worth the governance risk it creates. AI tools are genuinely useful. They are not useful enough to justify an ITAR violation, a security incident, or a breach of contractual obligations that underpin the program's relationship with its customer and its partners.

The organisations and practitioners who will use AI tools most effectively in defence program contexts are not those who adopt them most enthusiastically or most cautiously. They are those who govern them most deliberately - understanding what the tools can do, what the data handling implications are, and what governance framework is required to use them responsibly.

References & Further Reading

Samsung ChatGPT Data Leak | https://gizmodo.com/chatgpt-ai-samsung-employees-leak-data-1850307376

Boeing ITAR Settlement | https://www.defenceconnect.com.au/industry/13714-us-issues-51-million-penalty-to-boeing-over-unauthorised-exports-data-transfers

Cyberhaven AI Company Data Usage | https://www.cyberhaven.com/blog/4-2-of-workers-have-pasted-company-data-into-chatgpt

Cyberhaven 2026 AI Adoption and Risk Report | https://www.cyberhaven.com/resources/report/ai-adoption-risk-report-2026

Defence and Strategic Goods List | https://www.defence.gov.au/business-industry/exporting/export-controls-framework/defence-strategic-goods-list

Defence Industry Security Program | https://www.defence.gov.au/business-industry/industry-governance/industry-regulators/defence-industry-security-program

Australian Government Hosting Certification Framework | dta.gov.au

ITAR — Directorate of Defense Trade Controls | https://www.pmddtc.state.gov/ddtc_public

Wassenaar Arrangement | https://www.wassenaar.org/

Australian Government Protective Security Policy Framework (PSPF) | https://www.protectivesecurity.gov.au/pspf-annual-release

About Baseline: Baseline exists to professionalise Configuration Management and make its value explicit. Not as an afterthought, and not as a clerical function, but as a strategic capability that underpins delivery confidence.